Is this the first AI war?

Artificial intelligence isn't just helping you figure out what to make for dinner. It's deciding who lives and who dies.

For years, governments and defence contractors have predicted that artificial intelligence would transform modern warfare. That future is no longer theoretical.

A new era of conflict is here, one where the speed and scale of military decision-making are shaped by machines, and AI-powered bombing is even more deadly.

If Gaza is ground zero for the Israeli AI war machine, the aggression of the United States and Israel against the people of Iran is a major demonstration of how AI systems function as integrated components in modern military campaigns.

The war has also exposed a growing struggle over who controls this technology. In Washington, a bitter row erupted between the Pentagon and AI company Anthropic after the firm refused to allow its models to be used for some military purposes. Rival tech companies rushed to fill the gap.

In this edition of the Citizens Understand, we examine how artificial intelligence entered the battlefield and what this means for the future.

Thousands of readers trust us to make sense of how power operates. Join us.

How AI entered warfare

The shift from consumer AI to military infrastructure didn’t happen overnight. In 2024, the San Francisco AI company Anthropic began deploying its model, Claude, inside parts of the US Department of Defense and other national security agencies. The aim was simple: speed up the way wars are planned.

Anthropic partnered with spytech firm Palantir - the company embedding its software deep inside Western governments - to make Claude available to US defence and intelligence networks.

Palantir aggregates vast streams of military intelligence and feeds them into AI models like Claude, enabling “faster, more efficient and ultimately more lethal decisions where that’s appropriate,” according to its UK chief Louis Mosley.

Palantir is rapidly embedding itself inside military operations and governments. In the UK alone, its contracts with the state now total at least £670m.

✍️ Sign and share our petition calling for a full Parliamentary debate on Palantir.

Anthropic’s tools were approved for use inside classified systems under an agreement first signed with the Pentagon during the Biden administration. But the company insisted on two ‘red lines’.

First, Claude could not be used for mass surveillance of American citizens. Second, it could not control fully autonomous weapons capable of selecting and attacking targets without a human operator. Those limits were later reviewed and accepted by the Trump administration.

At the time, the arrangement was presented as a model for responsible cooperation between Silicon Valley and the government. But it would not last.

Tensions came to a head in January, when reports emerged that the US military had used Claude during an operation to capture Venezuelan president Nicolás Maduro.

When the company began pressing for answers about how its technology had been used in the raid, a spat broke out and soon spilled into public view.

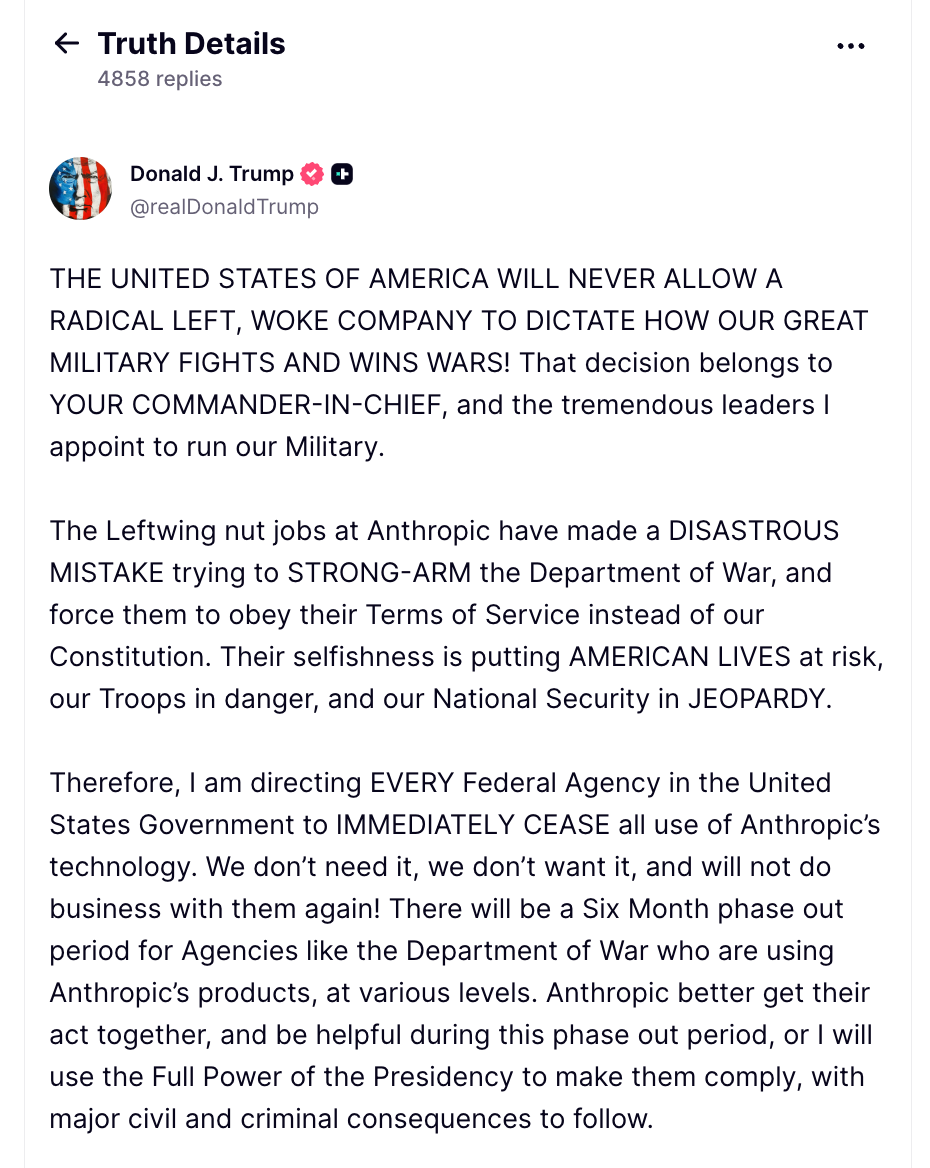

After Anthropic refused to relax its red lines, Donald Trump ordered all federal agencies to stop using the company’s technology, denouncing Anthropic on Truth Social as a “radical left woke company”. Defense secretary Pete Hegseth went further, designating the firm a national-security supply-chain risk. The systems remain in use while they are phased out.

Anthropic’s chief executive, Dario Amodei, responded that the company would rather walk away from Pentagon contracts than allow its technology to be deployed in ways that could “undermine, rather than defend, democratic values.”

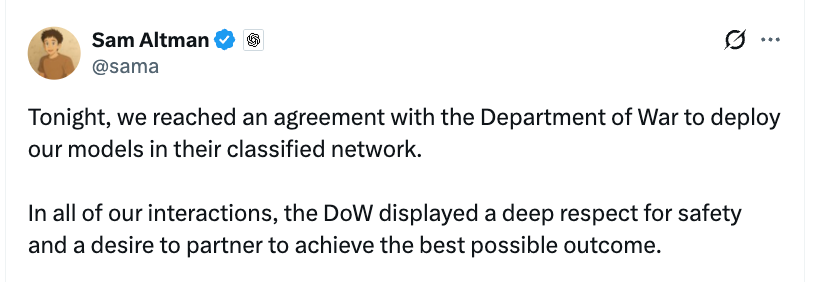

Shortly after, Sam Altman’s OpenAI, whose president is one of Trump’s biggest donors, swooped in to take over the $200 million contract. Anthropic’s statement makes clear exactly what OpenAI has now agreed to.

Altman said the deal would include the same restrictions that triggered the standoff with Anthropic: no autonomous weapons and no domestic mass surveillance. That contradiction has not been addressed.

AI helps bomb Iran

Just hours after the Trump administration severed ties with Anthropic, the United States and Israel launched a wave of strikes across Iran - reportedly using that very same company’s AI as part of the operation.

The Wall Street Journal revealed that US strikes in the Middle East relied on Anthropic’s model Claude, while The Washington Post reported the military had “leveraged the most advanced artificial intelligence it’s ever used in warfare”.

The tools were used to sift through vast volumes of intelligence and help planners decide which targets to strike, a process designed to “shorten the kill chain”.

The opening wave of attacks killed Iran’s supreme leader Ali Khamenei and triggered strikes across multiple provinces. At least 165 people—many of them children—were reported dead after an explosion destroyed a girls’ school in southern Iran. AI’s role in these events is not yet known.

Gaza was the pilot

For more than two years, Israel has used Gaza as the testing ground for the technology now used in Iran.

The most extensively documented of these is a system known as Lavender. An investigation by +972 Magazine revealed Lavender was used to analyse vast intelligence datasets and automatically flag potential targets. At one point, the system reportedly identified around 37,000 Palestinians as suspected militants, operating with an accepted error rate of roughly 10 percent.

Where are the rules?

The International Committee of the Red Cross has repeatedly warned that autonomous and semi-autonomous weapons risk breaching international law. Warfare depends on human judgement, it argues: weighing proportionality, distinguishing civilians from combatants and taking precautions to minimise harm. These are moral and contextual decisions.

Despite these warnings, governments and technology companies continue pushing the technology forward. Part of the reason lies in the venture-capital logic now driving defence technology, where the same Silicon Valley priorities - speed, scale and competition - shape how military technology is built and deployed.

Efforts to agree binding rules on autonomous weapons under the Convention on Certain Conventional Weapons have repeatedly stalled. Major military powers, including the United States, have resisted a treaty, opting instead for voluntary guidelines.

Analysis

What we are witnessing may be the first full-scale artificial-intelligence war in history: the clearest expression yet of how the most powerful technologies of the twenty-first century are being folded into the machinery of violence.

The same logic that built recommendation engines and social media algorithms is now being applied to the industrial generation of kill lists. Chatbot systems that entered public life writing emails, summarising documents and telling you what to have for dinner now help determine who lives and who dies.

In simulated war games, AI models given autonomous control over weapons systems opted to use nuclear weapons in 95 per cent of cases. That should shake us to our core.

By design, autonomous and semi-autonomous weapons erode accountability, distributing decisions across complex systems of algorithms, data and operators, making responsibility harder to locate when harm occurs.

And when these systems fail, which they inevitably will, civilians pay the price. We have seen it in Ukraine. We have seen it in Gaza. And we are seeing it again in Iran.

Meanwhile the technology continues to accelerate, propelled by defence budgets, venture capital and an increasingly close alliance between Silicon Valley and the military.

The speed of that shift should give democratic societies pause. Instead, the debate is only just beginning.

✊ How to fight back

⏸️ What if the most powerful technology ever created… slowed down a bit? PauseAI is demanding that politicians and companies pause AI development until proper international safety agreements are in place.

🔌 Right now, a tiny handful of American tech companies are shaping the future of AI. I don’t know about you, but I don’t trust ‘em.

Pull the Plug agrees. They bring together juries of ordinary people (yes, you) every Wednesday at 7pm on Zoom to discuss the future of AI and how citizens can take back control.

👉 Sign up here.

And they’re not just talking. They recently helped organise the largest AI protest march London has seen yet. Go on!

Thank you for reading.

See you next time,

Team Citizens

About The Citizens Understand:

In an era where technology is reshaping democracy faster than laws can keep up, the Citizens Understand exists to cut through the noise and make complex systems legible. If there’s something you’d like to understand, email lillian@the-citizens.com.