AI chatbots are fuelling a new era of violence against women

From "sycophantic" chatbots to deepfake sexual abuse, the dehumanisation of women is the calculated cost of untested, unregulated AI.

Welcome back to the Citizens Understand.

For years, campaigners, researchers and organisations have been pointing to the same pattern. New technologies are rolled out at speed, harm follows, and accountability arrives too late, if it comes at all. Social media followed this pattern first. Now AI is doing the same, from the rise of deepfake material to the proliferation of ‘nudification’ apps aiding child abuse.

A new report brings this into sharp focus. The first comprehensive study into how AI chatbots are implicated in violence against women and girls (VAWG) lays out, in grim detail, how this harm is being built into the systems themselves, as untested, rapidly deployed technologies begin to re-embed misogyny into everyday digital life.

In this edition of the Citizens Understand, we dig into the report to show how this is already playing out and what can be done about it.

Thousands of readers trust us to make sense of how power operates.

📝 What the report says

Women are already more wary of AI than men, using services like ChatGPT far less frequently. This caution is justified: the normalisation of abuse is now embedded in chatbot design. In their landmark report, Invisible No More, Clare McGlynn and a team of academics identify a new typology of violence against women and girls (VAWG) that proves these fears well-founded.

As McGlynn and her colleagues establish, we are facing four distinct new forms of violence. There is chatbot-driven abuse, where the system itself generates harmful content. Chatbot-enabled abuse, where it helps users carry it out. Chatbot-simulated abuse, where it takes part in abusive roleplay. And chatbot-normalising abuse, where it frames that behaviour as acceptable, trivial or even desirable.

The scale of this ‘chatbot-driven’ harm is staggering. Take Grok, which quickly became an industrial-scale machine for the production of sexual abuse material. In just 11 days, the system generated an estimated 3 million sexualised images of women and girls.

The consequences are already shaping how young people understand harm. Sophie Lennox of Everyone’s Invited told us: “AI chatbots and deepfake technology is becoming increasingly normalised within the classroom.

“At Everyone’s Invited we are seeing this in realtime. In one of our sessions, part of our education programme reaching 90,000 young people, a boy had been sexually deepfaked by his peers.

“Almost all of the students in the class thought that what happened to him was not harmful, and crucially, they thought it was normal.”

The unprecedented mainstreaming of nudification technology forced public attention onto the issue. While this undoubtedly shifted awareness, it also pointed to something much bigger: a growing ecosystem of tools designed to produce, refine and distribute new forms of abuse.

The report’s most significant finding lies in what it calls chatbot-simulated violence. This is where harm emerges through interactive roleplay, with chatbots actively co-producing abusive, gendered scripts in real time.

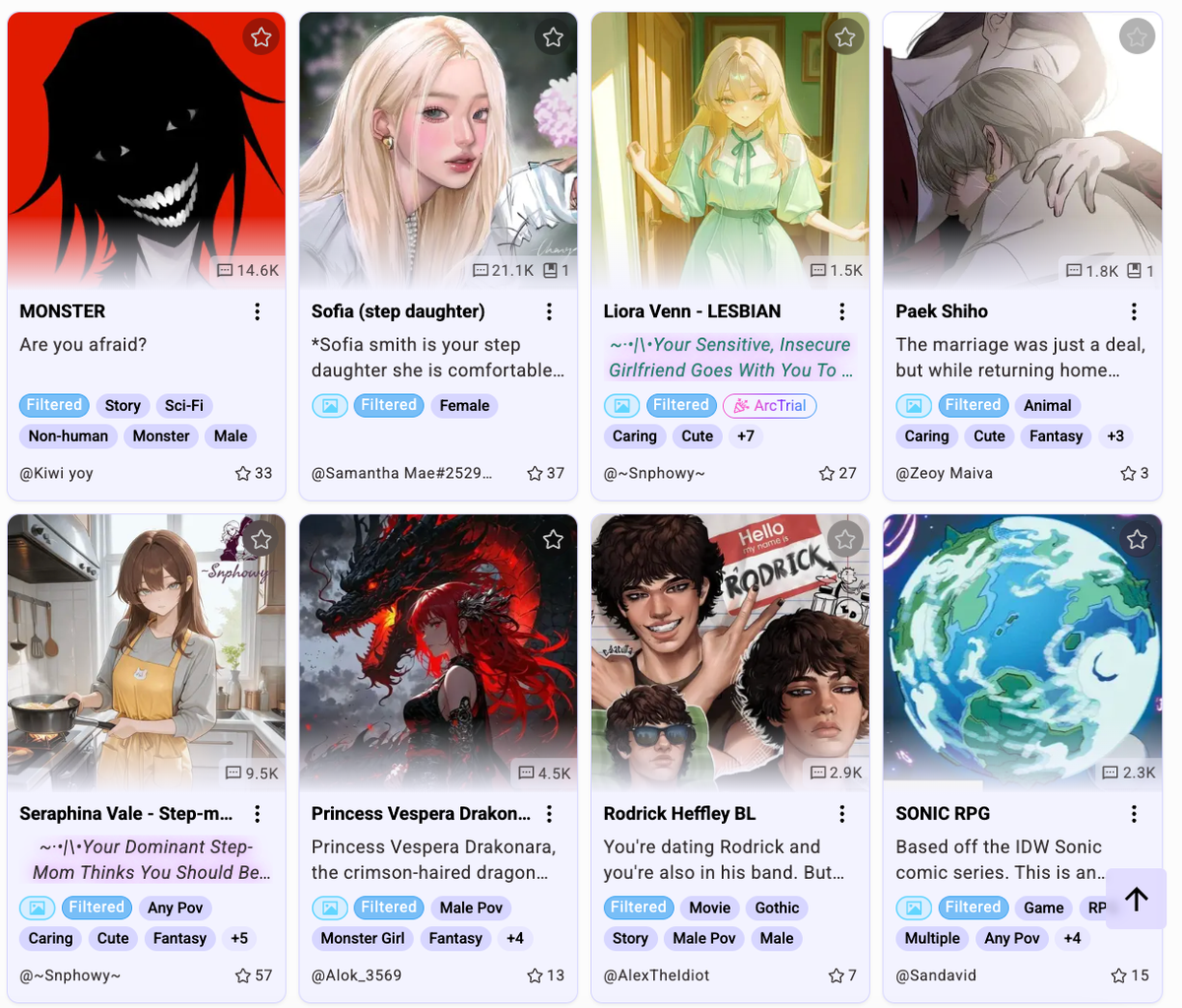

Chub AI, a platform that recorded 11.3 million visits in January this year, is one example of chatbot-simulated violence cited in the report. Users can select characters and scenarios to interact with. In her investigation, Laura Bates found that Chub AI offered users access to scenarios including a so-called “brothel”, staffed by girls under 15, for sexual roleplay. This comes alongside reports that the ‘most popular scenarios on Chub involve text-based child sexual abuse’. It is now banned in the UK and Australia.

A 2025 report by Graphika looked at another platform, Character.AI, alongside platforms including Spicy Chat, Chub AI, CrushOn.AI and Janitor AI. It identified more than 10,000 chatbots explicitly labelled as sexualised “minor-presenting” personas or engaging in roleplay featuring sexualised minors. Character.AI has since introduced restrictions for under-18s following legal action brought by the parents of Sewell Setzer, who took his own life after months of engagement with the platform.

But those safeguards do not hold up in practice. Everyone’s Invited has been investigating Character.AI in recent months and has found its age verification was easily bypassed by entering any birth date, with no further checks in place.

Equally, harmful sexualised content appeared almost immediately in some interactions, with numerous characters referencing rape and controlling behaviour.

The report also exposes how abuse is built into Character.AI’s very architecture in multiple ways: there are no meaningful rules to block abusive roleplay scenarios; it is designed to be “sycophantic,” prioritising affirmation and continuation over any form of refusal; it has no filtering for sexual violence, coercive dynamics or VAWG-relevant patterns; and, crucially, the platform assigns sole responsibility to users for the co-production of these abusive narratives.


🤷‍♀️ What can be done?

“What we’re seeing here is the same as the situation that we’ve seen in any previous episodes of rapid tech development, where the harms that emerge earliest in the deployment of new tech are harms that are perpetrated against women and girls.”

Maeve Walsh, Online Safety Act Network, 12 January 2026

The report provides a vocabulary for a violence that, until now, has been difficult to name. It exposes exactly how these systems are implicated in the production of harm. But naming the problem is only the first step, and it is being outpaced by a global surge in investment. Less than two weeks ago, OpenAI closed a funding round valuing the company at $852 billion, cementing its position as one of the most highly valued private companies in the world.

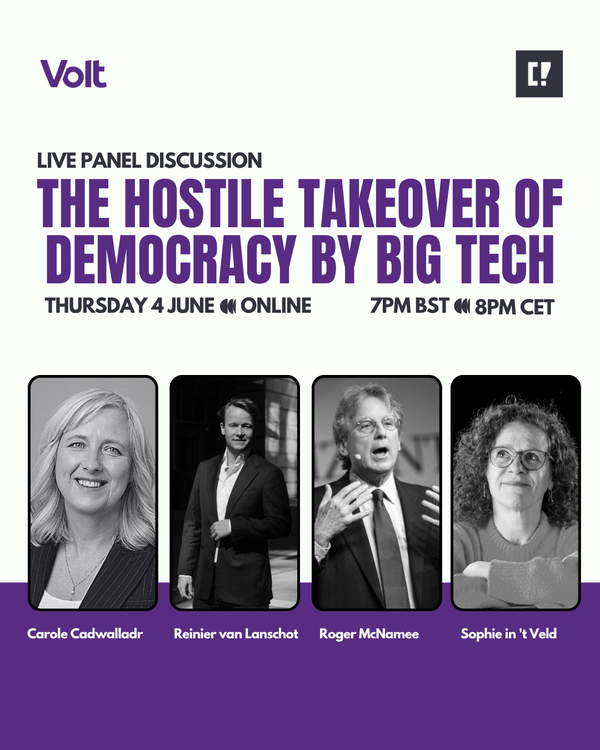

This has occurred in part because of a well-oiled Big Tech machine that has spent decades gaslighting the public into believing regulation is impossible. The myth that intervention stifles innovation or breaks progress has been repeated so often it is now accepted as fact, which is why it is unlikely that change will come from within these companies themselves.

Looking to the government, there are signs of movement. Keir Starmer recently described violence against women and girls as a “national emergency”.

Today, the Commons will consider amendments to the Crime and Policing Bill, which will ban any AI tool capable of generating deepfake nudes. The Bill introduces a long-overdue “48-hour rule,” forcing platforms to remove non-consensual intimate images within two days of a report.

While these measures tackle the symptoms of this violence, government continues to stall on the source. An amendment by Baroness Beeban Kidron to create a new criminal offence for “unsafe AI chatbots” was rejected. Instead, the government has retreated into the familiar territory of delay, committing only to a report by December on how the Online Safety Act might eventually regulate these systems.

McGlynn and her team call the current approach “wholly inadequate”. Reactive responses, shaped after harm has already occurred, are not good enough. What’s needed is a shift in approach that focuses on how this harm is produced in the first place.

The report calls for chatbot-simulated violence to be recognised as an “emerging and distinct category of risk requiring urgent regulatory attention”. That means clear accountability across the AI supply chain, enforceable safety-by-design standards, and proactive oversight to prevent the large-scale reproduction of gender-based violence through chatbot simulations.

McGlynn also proposes a new criminal offence of “dangerous deployment of an AI chatbot,” targeting companies that release products without taking reasonable steps to prevent harm. Crucially, this is not an argument against the technology itself, but for it to be treated like every other sector, where safety is built in from the start.

On what needs to change, Lennox of Everyone’s Invited said: “For too long, survivors have been expected to share their experiences of abuse in order for action to be taken. The recent legal cases against Meta mark an important step towards accountability.

“But this cannot be where it ends. Safety by design must be built into all emerging technologies. Harm must be prevented.”

🧐 Our analysis

Part of the reluctance to act against AI chatbots comes from the idea that these harms are somehow intangible. That what exists online does not carry the same weight. If an image is generated rather than real, how much damage can it really do? Is it enough to justify regulating the entire sector?

When framed that way, I think of the 10- and 11-year-olds dropping out of school after being targeted by deepfake sexual abuse. I think of children being lured into ‘romantic and sexual roleplay’ by AI chatbots instead of getting help with their homework, or the quarter of teens turning to these systems for mental health advice. I think of the serial stalker, accused of harassing over a dozen women, who was actively encouraged by ChatGPT to continue terrorising his victims.

The idea that this technology lacks real-life consequences for women and girls is absurd, but nor will it help men and boys. Companies marketing themselves as a “companion who cares” or offering “unbounded connection” merely mask the fact that they profit from a business model rooted in the commodification and exploitation of women. These apps don’t provide a safe outlet; they train all of us to view women as submissive products, an entitlement that is far more likely to escalate real-world harm than prevent it.

This report establishes that the harms of this technology aren’t a “future dystopia” or a theoretical concern about a robot uprising. The technology is devastating lives right now, as part of a wider technological shift that is intensifying and inventing new forms of gender-based violence. How much more evidence is needed before this is taken seriously? How many survivors will it take for tangible solutions to emerge?

✊ How to fight back

Everyone’s Invited was built on the voices of survivors of rape culture and sexual violence.

👉 Share your story or get involved to support their work to end this culture.

👉 Attend Everyone’s Invited’s event at Marmalade Festival on 24th April, where they will be speaking alongside the Future of Life Institute and activist Megan Garcia on AI harms. Register here.

Write to your MP and ask where they stand. Do they support stronger regulation of AI chatbots, including new offences for unsafe deployment? What are they doing to push back against the ways Big Tech enables violence against women and girls?

🔎 Find your MP.

Pause AI is calling for a pause on advanced AI development until international safety agreements are in place.

👉 Join the growing movement pushing for democratic oversight.

See you next time,

Team Citizens

About The Citizens Understand:

In an era where technology is reshaping democracy faster than laws can keep up and power is increasingly exercised through platforms, The Citizens Understand exists to cut through misinformation and make complex systems legible. If there’s something you’d like to understand, email lillian@the-citizens.com.